While artificial intelligence (AI) has already transformed a myriad of industries, from healthcare and automotive to marketing and finance, its potential is now being put to the test in one of the blockchain industry’s most crucial areas — smart contract security.

Numerous tests have shown great potential for AI-based blockchain audits, but this nascent tech still lacks some important qualities inherent to human professionals — intuition, nuanced judgment and subject expertise.

My own organization, OpenZeppelin, recently conducted a series of experiments highlighting the value of AI in detecting vulnerabilities. The experiments used OpenAI’s latest GPT-4 model to identify security issues in Solidity smart contracts. The code being tested came from Ethernaut, a smart contract hacking web game designed to help auditors learn how to look for exploits. During the experiments, GPT-4 successfully identified vulnerabilities in 20 out of 28 challenges.

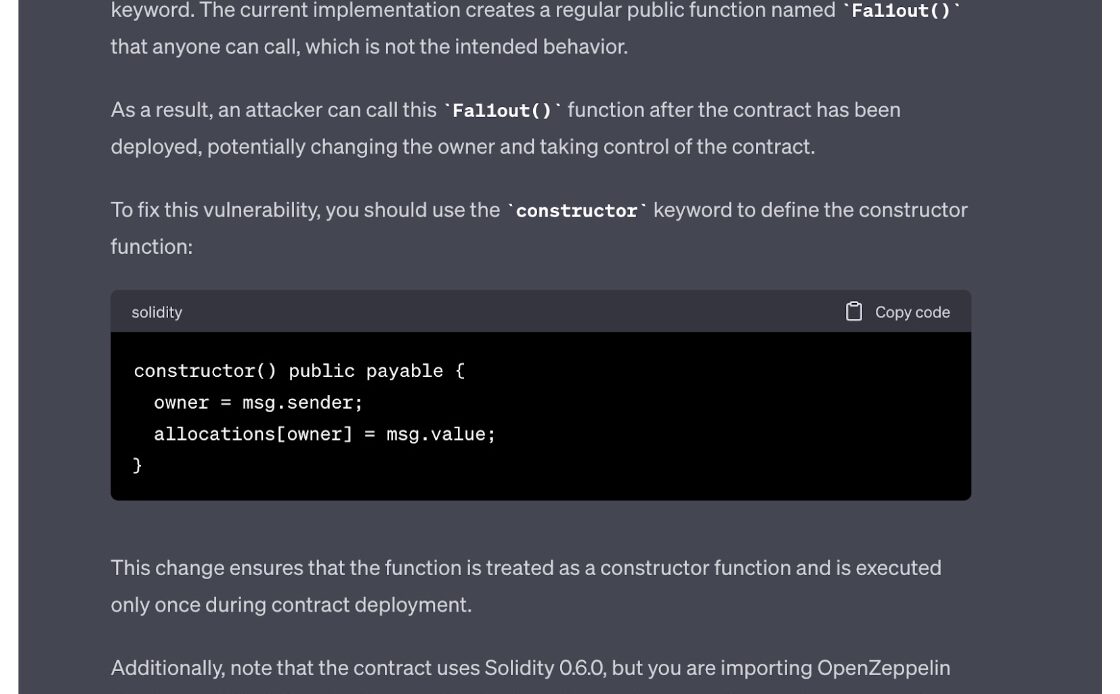

In some cases, simply providing the code and asking whether the contract contained a vulnerability produced accurate results, as it did with a naming issue in a constructor function.
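
The kind of constructor naming issue mentioned above can be illustrated with a minimal sketch. It assumes the issue resembles a well-known pattern in Solidity versions before 0.4.22, where a constructor was simply a function named after its contract, so a misspelled name silently became an ordinary public function. The `Wallet` contract, the `find_near_miss_constructors` helper and the 0.8 similarity threshold are all hypothetical and are not taken from the experiments described here.

```python
import re
from difflib import SequenceMatcher

# Hypothetical pre-0.4.22 Solidity contract, not taken from the article.
# The intended constructor "Wa1let" is misspelled (digit 1 instead of the
# letter l), so it is an ordinary public function that anyone can call
# to take ownership.
SOURCE = """
pragma solidity ^0.4.18;

contract Wallet {
    address public owner;

    function Wa1let() public {
        owner = msg.sender;
    }

    function withdraw() public {
        require(msg.sender == owner);
        msg.sender.transfer(address(this).balance);
    }
}
"""


def find_near_miss_constructors(source, threshold=0.8):
    """Return (contract, function) pairs where a function name closely
    resembles, but does not equal, the contract name."""
    findings = []
    contract_match = re.search(r"\bcontract\s+(\w+)", source)
    if contract_match is None:
        return findings
    contract = contract_match.group(1)
    for fn in re.findall(r"\bfunction\s+(\w+)\s*\(", source):
        if fn == contract:
            continue  # exact match: a genuine old-style constructor
        ratio = SequenceMatcher(None, fn.lower(), contract.lower()).ratio()
        if ratio >= threshold:
            findings.append((contract, fn))
    return findings


if __name__ == "__main__":
    for contract, fn in find_near_miss_constructors(SOURCE):
        print(f"{fn}() looks like a misspelled constructor for {contract}")
```

A name-similarity heuristic like this only catches one narrow class of bug and handles a single contract per file. It is meant to show why this kind of issue is comparatively easy to spot from the code alone, not to stand in for an audit.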

At other times, the results were more mixed or outright poor. Sometimes the AI had to be steered toward the correct response with a somewhat leading question, such as, “Can you change the library address in the previous contract?” At its worst, GPT-4 failed to come up with a vulnerability even when things were spelled out fairly clearly, as in, “Gate one and Gate two can be passed if you call the function from inside a constructor, how can you enter the GatekeeperTwo smart contract now?” At one point, the AI even invented a vulnerability that wasn’t actually present.

This highlights the current limitations of this technology. Still, GPT-4 has made notable strides over its predecessor, GPT-3.5, the large language model (LLM) used in OpenAI’s initial launch of ChatGPT. In December 2022, experiments with ChatGPT showed that the model could successfully solve only five out of 26 levels. Both GPT-4 and GPT-3.5 were trained on data up until September 2021 using reinforcement learning from human feedback, a technique that involves a human feedback loop to enhance a language model during training.

Coinbase carried out similar experiments, yielding a…

Click Here to Read the Full Original Article at Cointelegraph.com News…